Computational Vision Topics - Anna Regina Corbo - corbo@impa.br

This project reproduces the algorithm proposed by [Criminisi, Perez & Toyama, 2003] for removing large objects from digital images so that the result looks plausible to the human eye.

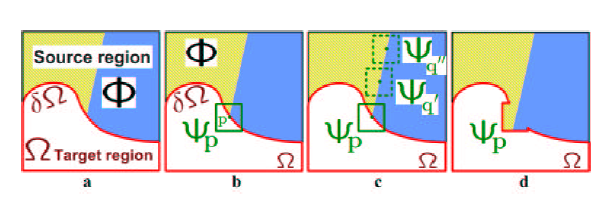

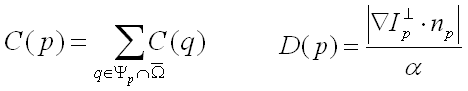

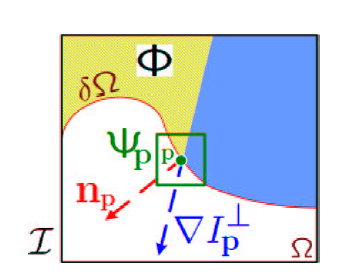

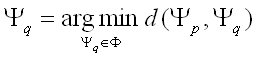

Here, inpainting is performed by an algorithm that fills the target region with linear structures and texture sampled from the source region. This propagation by exemplar-based texture synthesis works by choosing the best-matching sample from the source region, i.e., the one most similar to the parts of the patch being filled that are already known. For example, if the patch P lies on the continuation of an image edge, the best matches are most likely to lie along the same (or a similarly coloured) edge. Figure 1 illustrates this point.
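The best-match step can be sketched as follows. This is a minimal illustration under simplifying assumptions, not the project's actual implementation: the image is grayscale (a list of lists of floats), patches are squares of side `2*half + 1`, candidate source patches must lie entirely in the known region, and the search is exhaustive. The distance is a sum of squared differences (SSD) computed only over the target pixels that are already known, in the spirit of the paper. The function names `masked_ssd`, `best_match` and `fill_patch` are hypothetical, and the sketch covers only the match-and-copy step for a single target patch.

```python
from typing import List, Optional, Tuple

Image = List[List[float]]
Mask = List[List[bool]]  # True where the pixel is known (source or already filled)


def masked_ssd(image: Image, known: Mask, ty: int, tx: int,
               sy: int, sx: int, half: int) -> float:
    """SSD between the target patch centred at (ty, tx) and the source patch
    centred at (sy, sx), using only pixels already known in the target patch."""
    total = 0.0
    for dy in range(-half, half + 1):
        for dx in range(-half, half + 1):
            if known[ty + dy][tx + dx]:
                diff = image[ty + dy][tx + dx] - image[sy + dy][sx + dx]
                total += diff * diff
    return total


def best_match(image: Image, known: Mask, ty: int, tx: int,
               half: int) -> Optional[Tuple[int, int]]:
    """Exhaustively search for the fully known source patch with the lowest SSD."""
    h, w = len(image), len(image[0])
    best, best_cost = None, float("inf")
    for sy in range(half, h - half):
        for sx in range(half, w - half):
            # Skip candidates that overlap the unknown (target) region.
            if not all(known[sy + dy][sx + dx]
                       for dy in range(-half, half + 1)
                       for dx in range(-half, half + 1)):
                continue
            cost = masked_ssd(image, known, ty, tx, sy, sx, half)
            if cost < best_cost:
                best, best_cost = (sy, sx), cost
    return best


def fill_patch(image: Image, known: Mask, ty: int, tx: int,
               sy: int, sx: int, half: int) -> None:
    """Copy source pixels into the unknown pixels of the target patch."""
    for dy in range(-half, half + 1):
        for dx in range(-half, half + 1):
            if not known[ty + dy][tx + dx]:
                image[ty + dy][tx + dx] = image[sy + dy][sx + dx]
                known[ty + dy][tx + dx] = True


# Synthetic 8x8 image with a vertical edge between columns 3 and 4.
H, W = 8, 8
img = [[0.0 if x < 4 else 1.0 for x in range(W)] for y in range(H)]
known = [[True] * W for _ in range(H)]
for y, x in [(5, 3), (5, 4), (6, 3), (6, 4)]:  # the "object" to remove
    img[y][x] = 0.5      # placeholder value, ignored because the pixel is unknown
    known[y][x] = False

match = best_match(img, known, 5, 4, half=1)
print(match)  # (1, 4): a patch lying on the same edge
fill_patch(img, known, 5, 4, *match, half=1)
print([row[2:6] for row in img[4:7]])
```

In this toy example the only zero-cost candidates are centred on column 4, i.e. they straddle the same edge as the target patch, so copying from the match continues the edge through the removed pixels, as described above.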
